Reddit executives are quietly implementing sweeping changes to content moderation policies as the company prepares for its long-anticipated initial public offering. Internal documents and conversations with former employees reveal a systematic effort to sanitize the platform’s most controversial corners while maintaining the authentic chaos that defines Reddit’s culture.

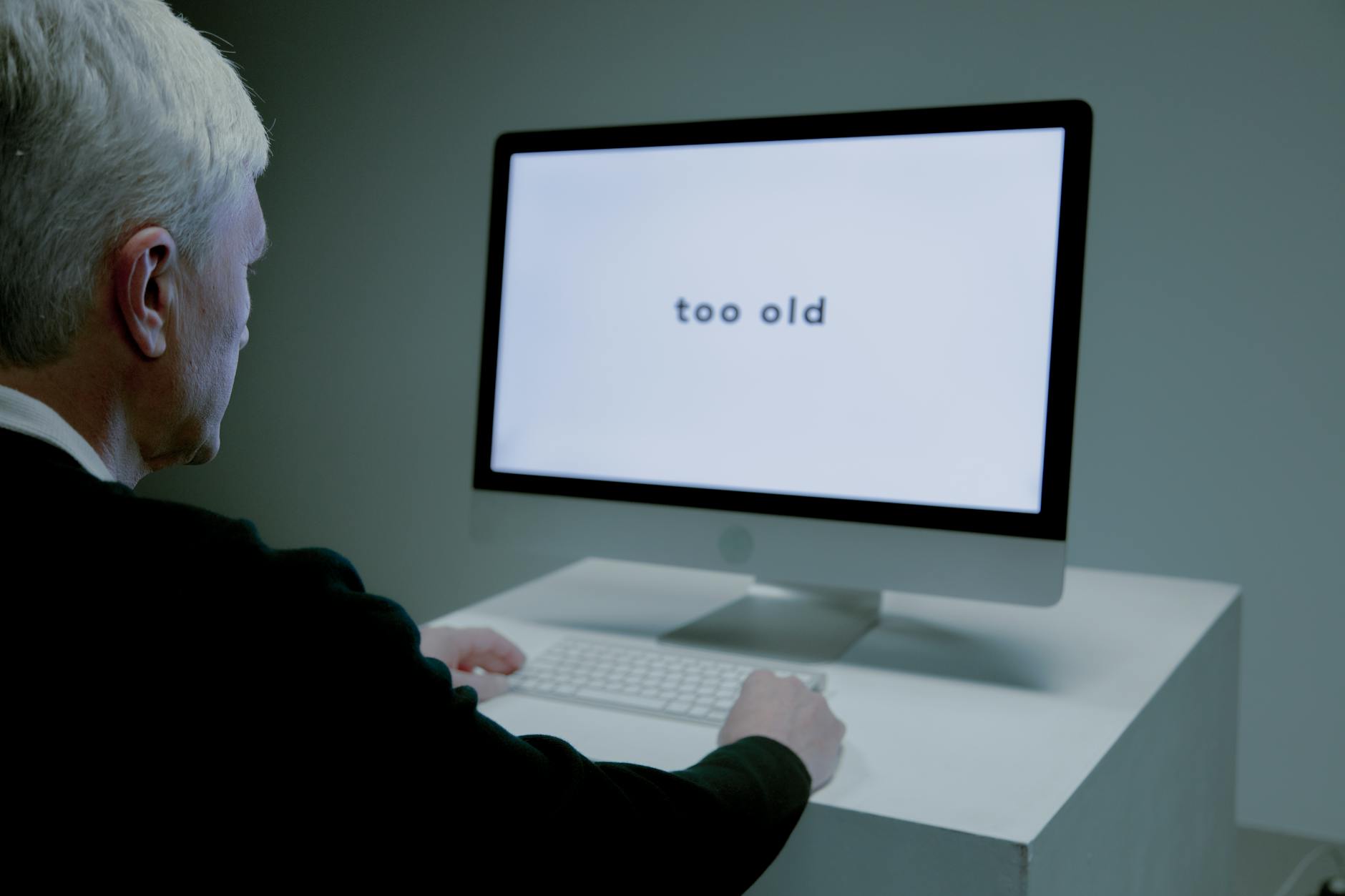

The sixteen-year-old social media platform, which filed confidentially for an IPO in late 2021, faces a delicate balancing act. Investors demand advertiser-friendly content policies, but Reddit’s 430 million monthly active users expect the irreverent discussions and niche communities that built the site’s reputation as “the front page of the internet.”

“Reddit is trying to thread the needle between Wall Street expectations and user authenticity,” says Sarah Chen, a digital media analyst at Wedbush Securities. “They’re essentially performing content surgery while the patient is awake.”

The Cleanup Campaign Behind Closed Doors

Since appointing Drew Vollero as CFO in March 2021, Reddit has accelerated policy changes across its thousands of communities, known as subreddits. The company banned several high-profile controversial subreddits and tightened enforcement around harassment, misinformation, and sexually explicit content.

Recent policy updates include stricter rules around “brigading” – coordinated harassment campaigns that jump between communities – and expanded definitions of hate speech. Reddit also introduced new automated systems to flag potentially problematic content before it gains traction, a significant shift from the platform’s historically hands-off moderation approach.

The changes haven’t gone unnoticed by power users and volunteer moderators who run Reddit’s communities. Several longtime moderators, speaking on condition of anonymity, describe increased pressure to enforce content policies that previously existed only on paper. One moderator of a political discussion subreddit with over 500,000 subscribers says Reddit administrators now regularly review moderation decisions and request explanations for controversial posts that remain live.

“The guidance keeps getting more specific about what crosses the line,” the moderator explains. “Things that would have been fine two years ago now get flagged immediately.”

Advertising Revenue Drives Policy Decisions

Reddit’s advertising business has grown dramatically in recent years, reaching over 100,000 advertising clients by 2022. Major brands including Samsung, Hyundai, and Verizon now run campaigns on the platform, but advertiser concerns about brand safety continue to influence content policy decisions.

The company introduced “brand safety” tools that allow advertisers to exclude their ads from controversial subreddits automatically. However, these tools create a financial incentive to moderate aggressively, since communities that advertisers exclude generate less revenue for Reddit.

“Every controversial post that stays up is a potential screenshot that ends up in a brand safety presentation,” explains Marcus Rivera, a former Reddit advertising executive. “The IPO timeline makes this calculation even more urgent.”

Reddit’s approach differs significantly from other social platforms preparing for public markets. Unlike competitors that rely heavily on algorithmic content curation, Reddit’s community-driven model means policy changes must account for thousands of volunteer moderators who enforce rules differently across communities.

The platform has invested heavily in creator economy features, including a new revenue-sharing program for popular contributors and expanded live streaming capabilities. These initiatives mirror successful strategies from other platforms but require consistent content policies to attract mainstream creators and their audiences.

Community Backlash and User Retention Concerns

Policy changes have sparked resistance across Reddit’s user base, particularly in communities that pride themselves on minimal moderation. The r/WallStreetBets community, which gained global attention during the GameStop trading saga, has clashed several times with Reddit administrators over content that may violate financial misinformation policies.

User surveys conducted by independent researchers show growing dissatisfaction with content moderation changes. Approximately 35% of active users report that recent policy updates have negatively affected their Reddit experience, though retention metrics suggest most users adapt to the new environment over time.

“Reddit’s walking a tightrope,” says Jennifer Park, who studies social media governance at Stanford. “Too much moderation kills the culture that makes Reddit unique, but too little moderation kills the IPO dreams.”

Some communities have moved discussions to alternative platforms like Discord or Telegram, though these migrations typically involve only the most dedicated users. Reddit’s unique threading system and established community hierarchies create significant switching costs that benefit the platform during policy transitions.

The company has experimented with community-specific content policies that allow different moderation standards across subreddits, but scalability concerns limit this approach for a public company facing regulatory scrutiny.

Technical Infrastructure Scaling

Behind the policy changes, Reddit has dramatically expanded its content moderation infrastructure. The company now employs over 300 full-time content moderators and safety specialists, up from fewer than 50 in 2019. New machine learning systems can identify potentially problematic content across multiple languages and cultural contexts.

These investments parallel similar moves by other platforms preparing for public markets.

Reddit’s approach includes partnerships with third-party content analysis firms and expanded legal teams to handle policy enforcement appeals. The platform processes over 10 million moderation actions monthly, requiring sophisticated systems to maintain consistency across diverse communities.

IPO Timeline and Market Pressures

Industry observers expect Reddit to complete its public offering sometime in 2024, pending favorable market conditions. The company’s valuation reached $10 billion during its latest private funding round, but public market reception will likely depend on demonstrating sustainable revenue growth and manageable content moderation costs.

Recent policy changes position Reddit to address investor concerns about regulatory risk and advertiser safety, key factors in social media company valuations. The platform’s diverse community structure provides natural content segmentation that could appeal to institutional investors seeking predictable business models.

However, Reddit’s success ultimately depends on maintaining user engagement while satisfying public company requirements. Early indicators suggest the platform is navigating this transition successfully, with user growth continuing despite tighter policies and stricter enforcement.

The coming months will test whether Reddit’s content moderation evolution can satisfy both Wall Street expectations and the passionate user communities that define the platform’s identity. Success could establish a new model for social media companies balancing authentic community culture with public market accountability.

Frequently Asked Questions

How is Reddit changing its content moderation for the IPO?

Reddit is implementing stricter policies around harassment, misinformation, and controversial content while expanding automated moderation systems and human oversight.

Will Reddit’s IPO affect regular users?

Users may notice increased content enforcement and policy changes, but Reddit aims to maintain core community features while meeting public company standards.