Data centers across the globe are undergoing their biggest transformation since the advent of virtualization. Nvidia’s latest AI accelerator chips have triggered a massive infrastructure overhaul that’s forcing cloud providers to rethink everything from power distribution to cooling systems.

The semiconductor giant’s newest generation of AI processors delivers unprecedented computational power for machine learning workloads, but it also creates bottlenecks and challenges that extend far beyond raw processing capabilities. Major cloud providers including Amazon Web Services, Microsoft Azure, and Google Cloud are scrambling to redesign their data center architectures to accommodate these power-hungry chips while maintaining the reliability and efficiency their customers demand.

Power and Cooling Challenges Reshape Data Center Design

Traditional data centers weren’t built for the massive power requirements of modern AI chips. While previous generations of servers typically consumed 300-500 watts per unit, a single high-end Nvidia accelerator can draw 700 watts or more on its own, and a server packed with eight of them can demand several kilowatts.

This dramatic increase in power density is forcing cloud providers to rethink their infrastructure from the ground up. AWS has begun deploying liquid cooling applied directly to chip packages, a departure from the air cooling that has dominated data centers for decades. Microsoft has experimented with immersion cooling, where entire servers are submerged in specially designed cooling fluids.
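To give a rough sense of what liquid cooling has to do, the steady-state heat balance Q = m · c · ΔT relates heat load to coolant flow. The sketch below is only an illustration: the 5,000-watt load and 10-kelvin temperature rise are assumed placeholder values, water is assumed as the coolant, and the function name is hypothetical rather than taken from any vendor's tooling.

```python
# Approximate specific heat of liquid water, in joules per kilogram per kelvin.
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0


def coolant_flow_kg_per_s(
    heat_load_watts: float,
    delta_t_kelvin: float,
    specific_heat: float = WATER_SPECIFIC_HEAT_J_PER_KG_K,
) -> float:
    """Mass flow needed to carry away a heat load at steady state.

    Assumes all heat goes into the coolant and ignores pump and pipe losses.
    """
    if delta_t_kelvin <= 0:
        raise ValueError("delta_t_kelvin must be positive")
    return heat_load_watts / (specific_heat * delta_t_kelvin)


if __name__ == "__main__":
    # Assumed example: a 5,000 W server, coolant allowed to warm by 10 K.
    flow = coolant_flow_kg_per_s(5_000, 10)
    print(f"Required flow: {flow * 60:.1f} kg of water per minute")
```

Halving the allowed temperature rise doubles the required flow, which is one reason plumbing capacity becomes a design constraint as power density climbs.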

The power grid implications are equally significant. A single rack of AI-optimized servers can now consume as much electricity as a small neighborhood. This has led cloud providers to seek out data center locations near dedicated power generation facilities and invest heavily in renewable energy projects to offset their carbon footprint.
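The neighborhood comparison can be made concrete with simple arithmetic. The following is a minimal sketch, not a sizing tool: the server counts, per-server wattage, overhead fraction, and average household draw are all assumed placeholder inputs, and `RackConfig` is a hypothetical helper introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class RackConfig:
    servers_per_rack: int
    watts_per_server: float
    # Extra draw for fans, switches, and conversion losses (assumed value).
    overhead_fraction: float = 0.0


def rack_power_kw(cfg: RackConfig) -> float:
    """Total rack draw in kilowatts, including the assumed overhead."""
    it_watts = cfg.servers_per_rack * cfg.watts_per_server
    return it_watts * (1.0 + cfg.overhead_fraction) / 1000.0


def household_equivalents(rack_kw: float, avg_household_kw: float) -> float:
    """How many average households draw the same power as one rack."""
    if avg_household_kw <= 0:
        raise ValueError("avg_household_kw must be positive")
    return rack_kw / avg_household_kw


if __name__ == "__main__":
    # Assumed inputs: compare a conventional rack with an AI-dense one.
    legacy = RackConfig(servers_per_rack=20, watts_per_server=400)
    ai = RackConfig(servers_per_rack=8, watts_per_server=6_000, overhead_fraction=0.1)
    for name, cfg in [("legacy", legacy), ("AI", ai)]:
        kw = rack_power_kw(cfg)
        homes = household_equivalents(kw, avg_household_kw=1.2)
        print(f"{name}: {kw:.1f} kW, roughly {homes:.0f} households")
```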

Google has reportedly invested over $2 billion in new data center infrastructure specifically designed for AI workloads, with facilities featuring advanced power distribution systems that can handle the variable loads these chips create during training and inference operations.

Memory and Storage Architecture Evolution

The computational power of modern AI chips has created a new challenge: keeping them fed with data fast enough to maintain peak performance. Traditional storage hierarchies that worked well for general computing workloads are proving inadequate for AI applications.

Cloud providers are implementing new memory architectures that include massive amounts of high-bandwidth memory directly connected to AI processors. These systems require specialized interconnects that can move terabytes of data per second between processors, memory, and storage systems.

Amazon’s latest AI instances feature custom-designed memory subsystems that can deliver over 900 gigabytes per second of memory bandwidth. This represents a fundamental shift from the memory architectures used in traditional cloud computing, where bandwidth requirements were measured in tens of gigabytes per second.
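The gap between those two bandwidth regimes is easy to quantify: the time to read a block of data once is at least its size divided by the available bandwidth. The sketch below applies that lower bound to the figures quoted above; the 140 GB working set is an assumed example size, not a number from any provider.

```python
GB = 1e9  # decimal gigabyte, in bytes


def transfer_time_seconds(num_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on the time to move num_bytes at a given sustained bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth_bytes_per_s must be positive")
    return num_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    # Assumed example: a 140 GB set of model weights read once from memory.
    working_set = 140 * GB
    for label, bandwidth in [("50 GB/s", 50 * GB), ("900 GB/s", 900 * GB)]:
        seconds = transfer_time_seconds(working_set, bandwidth)
        print(f"{label}: {seconds:.2f} s per full pass")
```

Because a processor may need to sweep its working set many times per second, this per-pass time often caps achievable throughput before raw compute does.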

The storage implications extend beyond just speed. AI model training often requires access to massive datasets that can span petabytes. Cloud providers are deploying new distributed storage systems that can deliver this data to AI processors with minimal latency while maintaining data integrity across thousands of simultaneous operations.

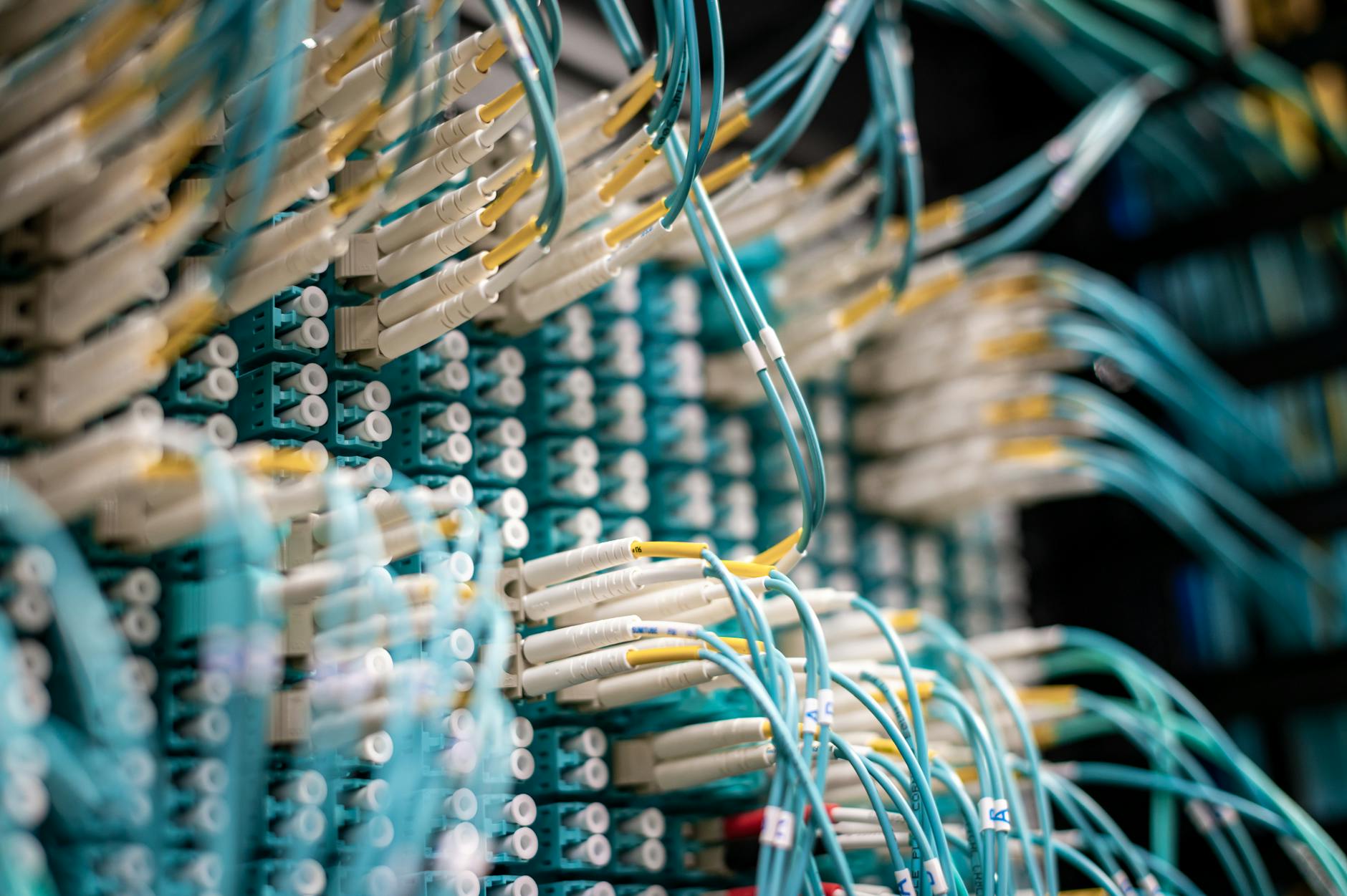

Network Infrastructure Transformation

The networking requirements for AI workloads have pushed cloud providers to develop entirely new approaches to data center networking. Traditional Ethernet-based networks, while adequate for most cloud applications, create bottlenecks when hundreds or thousands of AI processors need to communicate during large-scale training operations.
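One way to see why the network becomes the bottleneck is to estimate the time spent synchronizing gradients. The sketch below assumes a ring all-reduce, a common pattern in which each worker sends and receives about 2(N-1)/N times the payload; the article does not say which algorithm any provider uses, and the payload size and link speeds are placeholder values. It models bandwidth only and ignores per-message latency.

```python
def ring_allreduce_seconds(
    payload_bytes: float, num_workers: int, link_bytes_per_s: float
) -> float:
    """Bandwidth-only estimate of one ring all-reduce across num_workers."""
    if num_workers < 1:
        raise ValueError("num_workers must be at least 1")
    if link_bytes_per_s <= 0:
        raise ValueError("link_bytes_per_s must be positive")
    if num_workers == 1:
        return 0.0  # nothing to exchange
    # Each worker transfers 2 * (N - 1) / N of the payload over its link.
    traffic = 2 * (num_workers - 1) / num_workers * payload_bytes
    return traffic / link_bytes_per_s


if __name__ == "__main__":
    # Assumed example: 20 GB of gradients shared by 1,024 workers.
    payload = 20e9
    for label, gbit_per_s in [("25 Gb/s link", 25), ("400 Gb/s link", 400)]:
        link = gbit_per_s * 1e9 / 8  # convert gigabits/s to bytes/s
        t = ring_allreduce_seconds(payload, 1024, link)
        print(f"{label}: {t:.2f} s per synchronization step")
```

If a training step computes for less time than it spends synchronizing, the accelerators sit idle, which is the motivation for faster fabrics.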

Major cloud providers are implementing specialized networking fabrics designed specifically for AI workloads. These networks use advanced switching technologies and custom protocols optimized for the communication patterns typical in machine learning operations.

Microsoft has deployed what it calls “AI supercomputer” clusters that use custom networking hardware to connect thousands of AI processors with ultra-low latency connections. These systems require networking equipment that can handle the massive data flows generated when AI models are distributed across multiple processors.

The networking transformation extends to interconnections between data centers as well. Cloud providers are building dedicated fiber optic networks that can support the transfer of trained AI models and massive datasets between facilities in different geographic regions.

Cost and Resource Allocation Challenges

The infrastructure changes required to support modern AI chips are creating new economic models for cloud computing. The high cost of AI-optimized hardware means cloud providers must carefully balance capacity planning with demand forecasting.

Unlike traditional cloud computing, where resources can be scaled up or down quickly, AI infrastructure requires significant upfront investment in specialized hardware that may take months to deploy and configure. This has led to new pricing models in which customers may need to commit to longer-term contracts for AI computing resources.
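From the customer's side, the trade-off in a committed contract reduces to a break-even utilization. The sketch below assumes a simple model in which the committed rate is paid for every hour of the term while the on-demand rate is paid only for hours actually used; the hourly prices are invented placeholders, not real provider pricing.

```python
def breakeven_utilization(on_demand_hourly: float, committed_hourly: float) -> float:
    """Fraction of hours that must be used for a commitment to match on-demand cost."""
    if on_demand_hourly <= 0 or committed_hourly < 0:
        raise ValueError("prices must be positive")
    return committed_hourly / on_demand_hourly


def cheaper_option(
    expected_utilization: float, on_demand_hourly: float, committed_hourly: float
) -> str:
    """Return which option costs less at the expected utilization (0.0 to 1.0)."""
    threshold = breakeven_utilization(on_demand_hourly, committed_hourly)
    return "committed" if expected_utilization > threshold else "on-demand"


if __name__ == "__main__":
    # Assumed placeholder prices in dollars per instance-hour.
    on_demand, committed = 40.0, 26.0
    print(f"Break-even utilization: {breakeven_utilization(on_demand, committed):.0%}")
    print(cheaper_option(0.5, on_demand, committed))
```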

The scarcity of advanced AI chips has also created supply chain challenges that ripple through the entire cloud computing ecosystem. Cloud providers now work directly with Nvidia and other chip manufacturers to secure allocations of the latest processors, sometimes committing to purchases years in advance.

Some cloud providers are exploring alternative approaches, including partnerships with AI chip startups developing specialized processors for specific types of machine learning workloads. These alternatives could provide more predictable supply chains and potentially better performance for certain applications.

The transformation of cloud computing infrastructure driven by AI chip requirements represents more than just a hardware upgrade. It’s fundamentally changing how cloud providers design, build, and operate their facilities. The investments being made today in power systems, cooling technology, and specialized networking will define the capabilities of cloud computing for the next decade.

As AI applications become more sophisticated and widespread, the pressure on cloud infrastructure will only intensify. The companies that successfully navigate this transition will be positioned to lead the next phase of cloud computing, while those that fail to adapt may find themselves unable to compete in an increasingly AI-driven market.

Frequently Asked Questions

Why do AI chips require different data center infrastructure?

AI chips consume much more power and generate more heat than traditional processors, requiring specialized cooling and power systems.

How are cloud providers adapting to AI chip requirements?

They’re investing in liquid cooling systems, high-bandwidth memory, and custom networking to support AI workloads.