Hollywood’s post-production landscape just shifted dramatically. Adobe’s latest AI-powered video editing suite, featuring generative fill capabilities and automated rotoscoping, is already being deployed by major studios including Paramount and Netflix for upcoming projects. What once required teams of artists working for weeks can now be accomplished in hours.

The technology represents the most significant advancement in video post-production since the transition from film to digital editing. Early adopters report production timelines shrinking by 40-60% for complex visual effects sequences, fundamentally changing how studios approach project budgets and delivery schedules.

Adobe’s Firefly Video AI, integrated into After Effects and Premiere Pro, allows editors to generate missing footage, extend scenes, and create entirely new visual elements using text prompts. The system has been trained on licensed stock footage and Adobe’s own content library, addressing copyright concerns that have plagued other AI video tools.

Major Studios Embrace AI-Powered Workflows

Netflix has already integrated Adobe’s new tools into production workflows for several unannounced series, according to sources familiar with the company’s post-production operations. The streaming giant is particularly interested in the technology’s ability to generate establishing shots and background elements, reducing location shooting costs.

Paramount’s visual effects supervisor Sarah Chen recently spoke at the NAB Show about implementing AI tools for crowd replication and environment extensions. “We’re seeing 70% time savings on shots that previously required extensive green screen work and manual compositing,” Chen explained during a technical presentation.

The tools are proving especially valuable for episodic television, where tight schedules and budget constraints have always pressured post-production teams. Disney’s television division has reportedly begun pilot testing Adobe’s AI rotoscoping features, which can automatically isolate subjects from backgrounds with minimal manual cleanup.

Warner Bros. Discovery has integrated the technology into several ongoing productions, focusing on automated color matching and scene extension capabilities. The studio’s technical teams report that AI-generated sky replacements and time-of-day adjustments are now handled entirely through automated processes, freeing artists to focus on more creative tasks.

Technical Capabilities Reshaping Post-Production

Adobe’s generative fill technology for video works similarly to its popular Photoshop feature but handles moving images and maintains temporal consistency across frames. Editors can select areas of video and describe desired changes through text prompts, with the AI generating photorealistic results that match the original footage’s lighting, color, and motion characteristics.

The automated rotoscoping feature uses machine learning to track and isolate moving subjects throughout entire sequences. Traditional rotoscoping requires frame-by-frame manual work that can take days for complex shots. Adobe’s AI version handles most sequences automatically, requiring only minor artist corrections.

Object removal capabilities have advanced significantly, allowing editors to eliminate unwanted elements like boom microphones, safety equipment, or background distractions without visible artifacts. The system analyzes surrounding pixels and generates convincing replacements based on scene context.
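The idea of filling a removed region from its surroundings can be illustrated with a toy example. The sketch below is not Adobe’s method, which is not public. It is a deliberately simple neighbor-averaging fill on a small grayscale grid, and the function name `fill_masked` and the list-of-rows image format are assumptions made for illustration.

```python
def fill_masked(image, mask):
    """Replace masked pixels with values inferred from known neighbors.

    image: list of rows of numbers (a tiny grayscale frame).
    mask:  same shape, True where a pixel should be removed and refilled.
    Returns a new image; the input is left unchanged.
    """
    height, width = len(image), len(image[0])
    result = [row[:] for row in image]
    unknown = {(y, x) for y in range(height) for x in range(width) if mask[y][x]}

    # Work inward from the border of the hole, one ring per pass.
    while unknown:
        updates = {}
        for y, x in unknown:
            neighbors = [
                result[ny][nx]
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                if 0 <= ny < height and 0 <= nx < width and (ny, nx) not in unknown
            ]
            if neighbors:
                updates[(y, x)] = sum(neighbors) / len(neighbors)
        if not updates:
            break  # every pixel is masked, so there is nothing to infer from
        for (y, x), value in updates.items():
            result[y][x] = value
        unknown.difference_update(updates)
    return result


frame = [[10, 10, 10], [10, 99, 10], [10, 10, 10]]
hole = [[False, False, False], [False, True, False], [False, False, False]]
cleaned = fill_masked(frame, hole)
```

Production tools go well beyond this, since averaging blurs texture and ignores motion between frames, but the basic principle of inferring missing pixels from their context is the same.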

Scene extension tools can expand the edges of frames, creating wider shots from existing footage or extending backgrounds beyond their original boundaries. This feature has proven particularly useful for streaming content, where different aspect ratios are required for various platforms and devices.
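To give a sense of how much new image area a reframe involves, the short sketch below computes how many pixels would have to be generated to move a frame to a different aspect ratio without cropping. The function name `extension_needed` and the example resolutions are illustrative assumptions, not part of any Adobe API.

```python
import math
from fractions import Fraction


def extension_needed(width, height, target_ratio):
    """Return (extra_columns, extra_rows) needed to reach target_ratio.

    target_ratio is a (horizontal, vertical) pair such as (21, 9).
    The original frame is kept whole; only new pixels are added.
    """
    target = Fraction(target_ratio[0], target_ratio[1])
    current = Fraction(width, height)
    if target > current:
        # Target is wider: keep the height and add columns.
        return math.ceil(height * target) - width, 0
    if target < current:
        # Target is taller: keep the width and add rows.
        return 0, math.ceil(width / target) - height
    return 0, 0


# A 1920x1080 (16:9) frame extended to ultrawide 21:9 and to square 1:1.
ultrawide = extension_needed(1920, 1080, (21, 9))
square = extension_needed(1920, 1080, (1, 1))
```

In this example the ultrawide version needs 600 additional columns and the square version needs 840 additional rows, all of which a scene extension tool would have to synthesize.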

Color matching and style transfer functions automatically adjust footage to match established visual styles or reference materials. Directors can now achieve consistent looks across scenes shot at different times or locations without extensive manual color grading work.
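One long-established way to approximate color matching is to shift and scale each color channel so that its mean and spread match those of a reference image. The sketch below shows that statistical approach on plain RGB tuples. It is a simplified illustration and makes no claim about how Adobe’s tools work; the function names and pixel format are assumptions.

```python
from statistics import mean, pstdev


def match_channel(source, reference):
    """Shift and scale one channel so its mean and spread match the reference."""
    s_mean, s_std = mean(source), pstdev(source)
    r_mean, r_std = mean(reference), pstdev(reference)
    scale = r_std / s_std if s_std else 1.0  # avoid dividing by zero on flat channels
    # Clamp to the valid 8-bit range after the adjustment.
    return [min(255.0, max(0.0, (v - s_mean) * scale + r_mean)) for v in source]


def match_colors(source_pixels, reference_pixels):
    """Match a list of (r, g, b) pixels to the color statistics of a reference."""
    src_channels = list(zip(*source_pixels))
    ref_channels = list(zip(*reference_pixels))
    matched = [match_channel(s, r) for s, r in zip(src_channels, ref_channels)]
    return [tuple(round(c) for c in px) for px in zip(*matched)]


dark_shot = [(10, 20, 30), (30, 40, 50)]
reference_look = [(100, 100, 100), (140, 120, 110)]
graded = match_colors(dark_shot, reference_look)
```

A real grading pipeline would work in a perceptual color space and account for shot content, but the example shows why a reference frame alone can carry much of a target look.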

Industry Response and Adoption Challenges

The Motion Picture Editors Guild has issued guidelines for AI tool usage that emphasize transparency in AI-assisted editing and keep editorial decision-making authority with human editors. Union representatives stress that these tools should enhance rather than replace creative professionals.

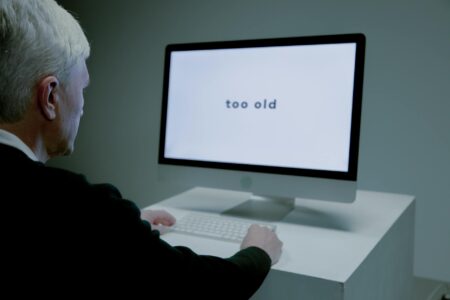

Some veteran editors express concern that over-reliance on automated solutions could erode artistic skills within the industry. However, early adopters report that AI tools free them to focus on storytelling and creative decisions rather than technical busywork.

Quality control remains a significant consideration. While AI-generated content has improved dramatically, it still requires human oversight to ensure consistency with project vision and technical standards. Studios are developing new review processes specifically for AI-assisted content.

Training costs represent another adoption hurdle. Technical teams need education on prompt engineering, AI workflow integration, and quality assessment techniques. Adobe has launched certification programs to address these skill gaps, partnering with film schools and professional organizations.

Similar to Microsoft’s AI integration transforming Excel workflows, Adobe’s video tools are fundamentally changing how creative professionals approach their daily tasks, requiring new skill sets while dramatically increasing productivity.

Economic Impact on Production Budgets

Budget allocation for post-production is shifting significantly as AI tools reduce labor-intensive tasks. Studios report reallocating funds from routine technical work toward creative development, additional shooting days, or enhanced production values in other areas.

Independent filmmakers and smaller production companies gain access to capabilities previously available only to major studios with large VFX budgets. This democratization effect mirrors broader trends in creative technology, where professional tools become accessible to smaller operators.

International co-productions benefit particularly from AI-assisted workflows, as teams can work more efficiently across time zones and language barriers. Automated tools reduce the need for extensive back-and-forth communication about technical specifications and revisions.

The technology’s impact extends beyond Hollywood to corporate video production, advertising, and digital content creation. Marketing agencies report using Adobe’s AI tools for rapid campaign development and A/B testing of different creative approaches.

Adobe’s aggressive push into AI-powered creative tools positions the company to capture more of the expanding digital content market. As streaming platforms increase content production and social media demands more video content, these efficiency gains become increasingly valuable across the entire entertainment ecosystem.

The integration of AI into mainstream video editing workflows represents a permanent shift in how visual content is created. While human creativity remains essential for storytelling and artistic vision, the technical barriers to bringing those visions to life continue to diminish. As studios refine their AI-assisted workflows and new generations of editors learn to leverage these capabilities, the entertainment industry is poised for its next major technological evolution.

Frequently Asked Questions

How are Hollywood studios using Adobe’s AI video editing tools?

Major studios like Netflix and Paramount are using Adobe’s AI tools for automated rotoscoping, scene extension, object removal, and generating background elements to reduce production timelines.

Do Adobe’s AI video editing tools replace human editors?

No. The tools automate technical tasks so that editors can focus on storytelling and creative decisions. They are intended to support human editors, not to replace them.